Preconfigured templates, Auto Assignment of Class & Characteristics, ISO 8000 and UNSPSC compliant

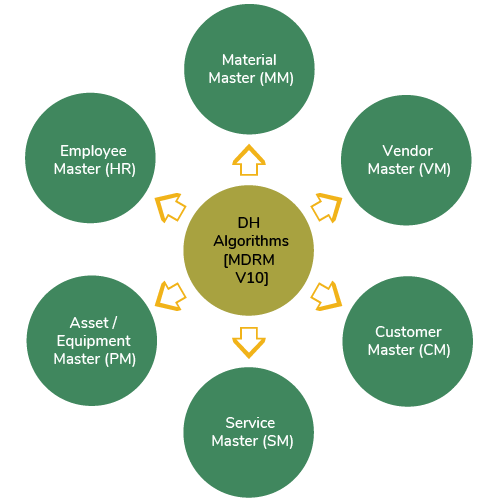

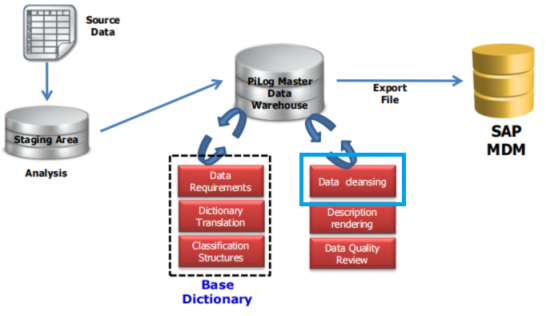

Data Quality Management aims to automate the standardization, cleansing, and management of unstructured/free-text data by using ASA (Auto Structured Algorithms) built on PiLog's taxonomy and on catalog repositories of master data records.
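The ASA algorithms themselves are not described here. As a rough illustration of what standardizing and cleansing free text can involve, the Python sketch below upper-cases a description, splits it into tokens, and expands abbreviations from a small lookup table. The `ABBREVIATIONS` table and the `cleanse_description` function are hypothetical stand-ins for dictionary-driven processing, not PiLog's implementation.

```python
import re

# Hypothetical abbreviation table (illustrative only, not the PPO's abbreviations).
ABBREVIATIONS = {
    "BRG": "BEARING",
    "SS": "STAINLESS STEEL",
    "DIA": "DIAMETER",
}

def cleanse_description(raw: str) -> str:
    """Normalize a free-text description: upper-case, tokenize, expand abbreviations."""
    text = raw.upper().strip()
    # Treat commas, semicolons, and runs of whitespace as token separators.
    tokens = re.split(r"[,;\s]+", text)
    # Expand known abbreviations; unknown tokens pass through unchanged.
    expanded = [ABBREVIATIONS.get(tok, tok) for tok in tokens if tok]
    return " ".join(expanded)

print(cleanse_description("brg, ball;  SS 25mm dia"))
```

A real cleansing pipeline would also need context-aware rules (for example, "SS" is not always stainless steel), which is why a simple lookup like this is only a starting point.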

Data Quality Management capabilities include, but are not limited to, the following:

| Criteria | MM | VM | CM | SM | PM | HR |
|---|---|---|---|---|---|---|
| Count | 2,182 Classes Completed | - | - | - | 2K+ Classes Completed | - |
| Validation | ERP Fields validation | General, Taxation, Banking Details Validation | General, Taxation, Banking Details Validation | ERP Fields validation | ERP Fields validation | Address Fields Validation |
| Verification | ERP Fields verification | General, Taxation, Banking Details Verification | General, Taxation, Banking Details Verification | ERP Fields verification | ERP Fields verification | Address Fields Verification |
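The table does not specify which individual checks make up "Banking Details Validation". One well-known example of such a check is validating an International Bank Account Number (IBAN) with the ISO 7064 MOD 97-10 check digits that IBANs use. The Python sketch below is an illustration of that kind of rule, not PiLog's validation logic; `iban_is_valid` is a hypothetical name, and a complete validator would also enforce country-specific lengths and formats.

```python
def iban_is_valid(iban: str) -> bool:
    """Return True if `iban` passes basic IBAN structure and MOD 97-10 checks."""
    s = iban.replace(" ", "").upper()
    # IBANs are 15 to 34 ASCII letters and digits long.
    if not (15 <= len(s) <= 34 and s.isascii() and s.isalnum()):
        return False
    # Two-letter country code followed by two check digits.
    if not (s[:2].isalpha() and s[2:4].isdigit()):
        return False
    # Move the country code and check digits to the end.
    rearranged = s[4:] + s[:4]
    # Replace each letter with two digits: A=10, B=11, ..., Z=35.
    numeric = "".join(str(int(ch, 36)) for ch in rearranged)
    # A valid IBAN leaves a remainder of 1 when divided by 97.
    return int(numeric) % 97 == 1

print(iban_is_valid("GB82 WEST 1234 5698 7654 32"))
```

Because any single-digit change alters the remainder modulo 97, this check catches common typing errors before a vendor record is saved.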

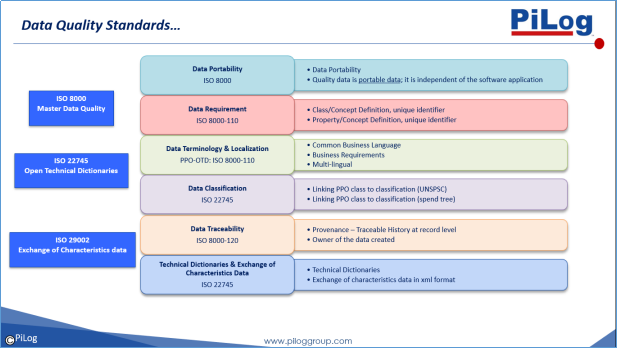

The International Organization for Standardization (ISO) has approved a set of standards for data quality as it relates to the exchange of master data between organizations and systems. These are primarily defined in ISO 8000-110, -120, -130, and -140 and ISO 22745-10, -30, and -40. Although these standards were originally inspired by the business of replacement-parts cataloguing, they potentially have a much broader application. The ISO 8000 standards are high-level requirements that do not prescribe any specific syntax or semantics. The ISO 22745 standards, by contrast, specify a particular implementation of the ISO 8000 requirements in Extensible Markup Language (XML) and are aimed primarily at parts cataloguing and industrial suppliers.

PiLog's Data Harmonization processes and methodologies comply with the ISO 8000 and ISO 22745 standards.

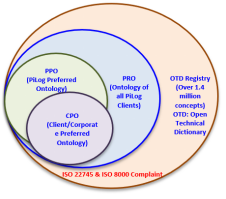

PiLog uses the PiLog Preferred Ontology (PPO) when structuring and cleansing Material, Asset/Equipment, and Services Master records, ensuring that data deliverables comply with the ISO 8000 methodology, processes, and standards for syntax, semantics, accuracy, provenance, and completeness.
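Of the five dimensions listed, completeness is the simplest to illustrate: a record is complete relative to a template when every characteristic the template requires has a value. The Python sketch below assumes, for illustration only, that a template is a list of required characteristic names and a record is a name-to-value mapping. `check_completeness` and `CompletenessReport` are hypothetical names; ISO 8000 does not prescribe this code.

```python
from dataclasses import dataclass

@dataclass
class CompletenessReport:
    missing: list[str]  # required characteristics with no (non-blank) value
    score: float        # fraction of required characteristics that are filled

def check_completeness(required: list[str], values: dict[str, str]) -> CompletenessReport:
    """Compare a record's values against the characteristics its template requires."""
    # A blank or whitespace-only value counts as missing.
    missing = [name for name in required if not values.get(name, "").strip()]
    filled = len(required) - len(missing)
    # A template with no required characteristics is trivially complete.
    score = filled / len(required) if required else 1.0
    return CompletenessReport(missing=missing, score=score)

report = check_completeness(
    ["TYPE", "BORE DIAMETER", "OUTSIDE DIAMETER", "WIDTH"],
    {"TYPE": "DEEP GROOVE", "BORE DIAMETER": "25 MM", "WIDTH": " "},
)
print(report.missing, report.score)
```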

Drawing on 25 years of experience in master data solutions across different industries, PiLog has developed the PiLog Preferred Ontology (PPO), a technical dictionary that complies with the ISO 8000 standard. The PPO is an industry-specific dictionary covering verticals such as Petrochemical, Iron & Steel, Oil & Gas, Cement, Transport, Utilities, and Retail.

PiLog's taxonomy consists of predefined templates. Each template contains a list of classes (object-qualifier or noun-modifier combinations), and each class has a set of predefined characteristics (properties/attributes). PiLog will make the PPO (classes, characteristics, and abbreviations) available for general reference through the Data Harmonization Solution (DHS) and Master Data Ontology Manager (MDOM) tools.
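The template structure described above (templates containing classes, each class a noun-modifier pair with its own characteristics and abbreviations) maps naturally onto a few nested record types. The following Python sketch is an assumption about how such data could be modelled, not the schema used by DHS or MDOM; all type names, field names, and the example class are hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class Characteristic:
    name: str            # property/attribute, e.g. "BORE DIAMETER"
    abbreviation: str    # short form used when building descriptions
    required: bool = True

@dataclass
class ClassDefinition:
    noun: str            # object, e.g. "BEARING"
    modifier: str        # qualifier, e.g. "BALL"
    characteristics: list[Characteristic] = field(default_factory=list)

    @property
    def class_name(self) -> str:
        # Conventional "NOUN, MODIFIER" display form.
        return f"{self.noun}, {self.modifier}"

@dataclass
class Template:
    name: str
    classes: dict[str, ClassDefinition] = field(default_factory=dict)

    def add_class(self, cls: ClassDefinition) -> None:
        # Index classes by their noun-modifier name for quick lookup.
        self.classes[cls.class_name] = cls

general = Template("GENERAL")
general.add_class(ClassDefinition("BEARING", "BALL", [
    Characteristic("BORE DIAMETER", "BORE"),
    Characteristic("OUTSIDE DIAMETER", "OD"),
]))
print(list(general.classes))
```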

PiLog creates a Client Preferred Ontology (CPO) by copying general templates common to most companies and industries, together with templates known or expected to apply to the specific client. The client team confirms the CPO templates by approving:

PiLog reserves the right to make the following changes to the dictionary:

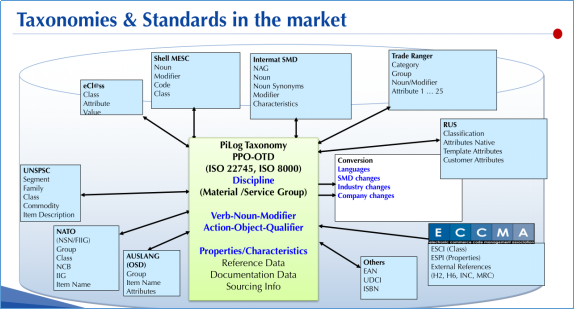

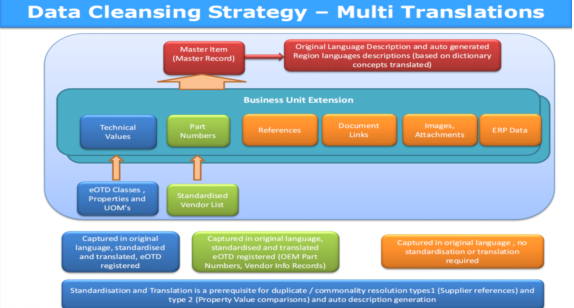
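The CPO creation step, copying general templates plus client-specific ones out of the PPO, can be sketched in Python as follows. This assumes, purely for illustration, that an ontology is a nested dictionary of template name to class name to characteristic names; `build_cpo` is a hypothetical helper, not a PiLog tool function.

```python
import copy

# Hypothetical shape: template name -> class name -> characteristic names.
Ontology = dict[str, dict[str, list[str]]]

def build_cpo(ppo: Ontology, general: list[str], client_specific: list[str]) -> Ontology:
    """Copy the selected PPO templates into a new client ontology."""
    cpo: Ontology = {}
    for name in general + client_specific:
        if name not in ppo:
            raise KeyError(f"template {name!r} is not in the PPO")
        # Deep copy so that later client edits do not alter the shared PPO.
        cpo[name] = copy.deepcopy(ppo[name])
    return cpo

ppo = {
    "GENERAL": {"BEARING, BALL": ["BORE DIAMETER"]},
    "VALVES": {"VALVE, GATE": ["SIZE"]},
    "MINING": {"PUMP, SLURRY": ["FLOW"]},
}
cpo = build_cpo(ppo, ["GENERAL"], ["VALVES"])
print(sorted(cpo))
```

The deep copy is the main design point: changes the client team approves in its CPO stay local, while the PPO remains the common reference.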

Cleansing and structuring a material master is a highly specialized field that requires international standards such as eOTD, USC, EAN, and ISO 8000; PiLog Master Data Project Management is used for this work.

Effective cleansing and structuring of a material master, through consistent and correct application of these standards across large volumes of data, requires specialized processes, methodologies, and software tools.

The material master forms the basis for a myriad of business objectives. PiLog understands the complex task of translating selected business objectives into master data requirements and then designing a project focused on delivering optimal results in a cost-effective way.

For a large number of line items, effective cleansing of the material master requires cleansing and standardizing the manufacturer and/or supplier data. A vendor/supplier cleanup and standardization therefore follows logically in the process.
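One common step in manufacturer/vendor standardization is spotting different spellings of the same company. The Python sketch below, an assumption rather than PiLog's method, builds a comparison key by upper-casing a name, dropping punctuation, and ignoring a hypothetical list of legal-form suffixes, then groups raw names that share a key. `manufacturer_key`, `group_variants`, and `LEGAL_SUFFIXES` are illustrative names.

```python
import re

# Hypothetical legal-form suffixes to ignore when comparing names.
LEGAL_SUFFIXES = {"INC", "LTD", "LLC", "GMBH", "AG", "CO", "CORP", "PLC"}

def manufacturer_key(name: str) -> str:
    """Reduce a manufacturer name to a punctuation- and suffix-free key."""
    tokens = re.findall(r"[A-Z0-9]+", name.upper())
    # Join without spaces so that "S.K.F." and "SKF" produce the same key.
    return "".join(t for t in tokens if t not in LEGAL_SUFFIXES)

def group_variants(names: list[str]) -> dict[str, list[str]]:
    """Group raw spellings that collapse to the same key, preserving input order."""
    groups: dict[str, list[str]] = {}
    for name in names:
        groups.setdefault(manufacturer_key(name), []).append(name)
    return groups

print(group_variants(["SKF", "S.K.F.", "SKF Ltd.", "Timken Co", "TIMKEN"]))
```

Exact-key grouping like this only finds the easy cases; misspellings still need fuzzy matching and human review before a canonical name is chosen.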

PiLog has its own specialized data refinery, PiLog Data, and has developed technology and methodologies aimed at delivering the best possible quality consistently and cost-effectively.

In response to the market need for cataloguing services in a consistent and repeatable manner, PiLog developed the world's first internationally proven standard for services cataloguing, the USC. Although the USC has since been accepted as part of the eOTD, the specific methodologies required to implement it successfully remain with PiLog.

The material master, as well as other master data tables, requires standardized base tables for, among others, unit of measure, unit of purchase, material types, and material groups. This is also a specialty of PiLog.
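A standardized base table is essentially a controlled lookup: every unit spelling found in legacy records maps to exactly one approved code, and anything unmapped is flagged rather than guessed. A minimal Python sketch, assuming a hypothetical `UOM_BASE_TABLE` whose codes are examples only:

```python
# Hypothetical base table: raw unit spellings -> standard unit code.
UOM_BASE_TABLE = {
    "EA": "EA", "EACH": "EA",
    "M": "M", "MTR": "M", "METER": "M", "METRE": "M",
    "KG": "KG", "KGS": "KG", "KILOGRAM": "KG",
}

def standardize_uom(raw: str) -> str:
    """Map a raw unit spelling to its standard code, or raise if it is unknown."""
    # Normalize case, surrounding whitespace, and a trailing period ("Kgs.").
    key = raw.strip().upper().rstrip(".")
    try:
        return UOM_BASE_TABLE[key]
    except KeyError:
        # Unmapped units go to review instead of being silently guessed.
        raise ValueError(f"unit {raw!r} has no entry in the base table") from None

print(standardize_uom(" mtr "), standardize_uom("Kgs."))
```

The same pattern applies to the other base tables mentioned above (unit of purchase, material types, material groups).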

Data cataloging is classified into the following methods:

Basic Structuring (Reference data extraction & Allocation of a PPO-OTD class)

Advanced Structuring (Value extraction)

Enrichment
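The first two methods can be illustrated with a toy Python example: basic structuring assigns a class to a free-text description, and advanced structuring extracts characteristic values into separate fields. The keyword matching and regular expressions below are simplifying assumptions chosen for illustration; they are not ASA or PiLog's allocation rules, and `CLASSES`, `allocate_class`, and `extract_values` are hypothetical names.

```python
import re
from typing import Optional

# Hypothetical catalogue: (noun, modifier) -> characteristic name -> regex for its value.
CLASSES = {
    ("BEARING", "BALL"): {
        "BORE DIAMETER": r"(\d+(?:\.\d+)?)\s*MM\s+BORE",
        "OUTSIDE DIAMETER": r"(\d+(?:\.\d+)?)\s*MM\s+OD",
    },
}

def allocate_class(description: str) -> Optional[tuple[str, str]]:
    """Basic structuring: return the first class whose noun and modifier both appear."""
    tokens = re.findall(r"[A-Z]+", description.upper())
    for noun, modifier in CLASSES:
        if noun in tokens and modifier in tokens:
            return (noun, modifier)
    return None  # unclassified; would go to manual review

def extract_values(description: str, cls: tuple[str, str]) -> dict[str, str]:
    """Advanced structuring: pull characteristic values out of the free text."""
    values = {}
    for name, pattern in CLASSES[cls].items():
        match = re.search(pattern, description.upper())
        if match:
            values[name] = match.group(1) + " MM"
    return values

desc = "Ball bearing, 25mm bore, 52 mm OD"
cls = allocate_class(desc)
print(cls, extract_values(desc, cls))
```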

PiLog follows three cataloging rules: